Those who are pursuing a career in data analytics or data science are likely familiar with the many relevant skills needed to be successful in this demanding field. Yet while the level of required knowledge and practical abilities may feel overwhelming to some, Alice Mello—assistant teaching professor for the analytics program within Northeastern’s College of Professional Studies—recommends all aspiring data professionals start with the basics.

“If you want to break into the area of data analytics, you need to have a passion for data and a passion for facts,” she says. “It’s not just about crunching numbers. By making sense of data, you are translating it into fact, drawing conclusions, and using those conclusions to create and tell stories.”

Luckily, those who take the time to understand the role that statistical modeling plays in data analytics—and the ways in which different modeling techniques can be used to analyze and manipulate data—will have the context needed to do just that.

Download Our Free Guide to Breaking Into Analytics

A guide to what you need to know, from the industry’s most popular positions to today’s sought-after data skills.

What is Statistical Modeling and How is it Used?

Statistical modeling is the process of applying statistical analysis to a dataset. A statistical model is a mathematical representation (or mathematical model) of observed data.

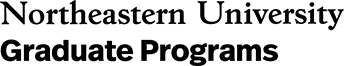

When data analysts apply various statistical models to the data they are investigating, they are able to understand and interpret the information more strategically. Rather than sifting through the raw data, this practice allows them to identify relationships between variables, make predictions about future sets of data, and visualize that data so that non-analysts and stakeholders can consume and leverage it.

“When you analyze data, you are looking for patterns,” says Mello. “You are using a sample to make an inference about the whole.”

3 Reasons to Learn Statistical Modeling

While data scientists are most often tasked with building models and writing algorithms, analysts also interact with statistical models in their work on occasion. For this reason, analysts who are looking to excel should aim to obtain a solid understanding of what makes these models successful.

“As machine learning and artificial intelligence become more commonplace, more and more companies and organizations are leveraging statistical modeling in order to make predictions about the future based off data,” Mello says. “[So] if you work in the area of data analytics, you need to understand how the underlying models work…No matter what kind of analysis you are doing or what kind of data you are working with, you are going to need to use statistical modeling in some way.”

Below are some of the benefits that come from having a thorough understanding of statistical modeling.

1. You will be better equipped to choose the right model for your needs.

There are many different types of statistical models, and an effective data analyst needs to have a comprehensive understanding of them all. In each scenario, you should be able to identify not only which model will help best answer the question at hand, but also which model is most appropriate for the data you’re working with.

2. You will be better able to prepare your data for analysis.

Data is rarely ready for analysis in its raw form. To ensure your analysis is accurate and viable, the data must first be cleaned up. This cleanup often includes organizing the gathered information and removing “bad or incomplete data” from the sample.

“Before any statistical model can be completed, you need to explore [and], understand the data,” says Mello. “If there is no quality [in the data], then you can’t really derive any insights from it.”

Once you know how various statistical models work and how they leverage data, it will become easier for you to determine what data is most relevant to the question you are trying to answer, as well.

3. You will become a better communicator.

In most organizations, data analysts are required to communicate their findings with two different audiences. The first audience consists of those on the business team who don’t need to understand the details of your analysis, but simply want to know the key takeaways. The second audience consists of those who are interested in the more granular details; this group will want both the list of broad conclusions and an explanation of how you reached them.

Having a thorough understanding of statistical modeling can help you better communicate with both of these audiences, as you will be better equipped to reach conclusions and therefore generate better data visualizations, which are helpful in communicating complex ideas to non-analysts. Simultaneously, a complex understanding of how these models work on the backend will allow you to generate and explain those more granular details when necessary.

Important Statistical Techniques in Data Analysis

Before any statistical model can be created, an analyst needs to collect or fetch the data housed on a database, clouds, social media, or within a plain excel file. To do this, analysts must also have a solid grasp of data structure and management, including how and where data is stored, fetched, and maintained. Those working in this field should thus share a passion for facts and data, and understand the basics of data manipulation, as well.

Once it comes time to analyze the data, there are an array of statistical models analysts may choose to utilize. According to Mello, most common techniques will fall into the following two groups:

- Supervised learning, including regression and classification models.

- Unsupervised learning, including clustering algorithms and association rules.

Regression Models

Data analysts use regression models to examine relationships between variables. Regression models are often used by organizations to determine which independent variables hold the most influence over dependent variables—information that can be leveraged to make essential business decisions.

“The most traditional regression models that have been used for a long time are logistic regression, linear regression, and polynomial regression,” Mello says. “These are the most common.”

Other examples of regression models can include stepwise regression, ridge regression, lasso regression, and elastic net regression.

Classification Models

Classification is a process in which an algorithm is used to analyze an existing data set of known points. The understanding achieved through that analysis is then leveraged as a means of appropriately classifying the data. Classification is a form of machine learning that can be particularly helpful in analyzing very large, complex sets of data to help make more accurate predictions.

“Classification models are a form of supervised machine learning which is often used when the analyst needs to understand how they got to a certain point,” Mello says. “They give you more than just an output; [they give you] more information that you can use to explain the results of the prediction to your boss or stakeholder.”

Some of the most common classification models include decision trees, random forests, nearest neighbor, and Naive Bayes.

There are also the neural networking models that are more used in AI. “These are very powerful models, and they can make accurate predictions very well,” Mello says, “but you typically cannot explain what is happening behind the scenes.”

Digging In Deeper: The unknown process that takes place with this model can be compared to putting raw dough into one side of a black box and getting freshly baked bread out the other side. Because you understand the inputs (dough) and outputs (bread) you can make certain assumptions about what happened inside the box—the dough was cooked—but the exact mechanism of how this happened cannot be known.

Learning Statistical Modeling Techniques

For those who are ready to explore statistical modeling techniques and advance in your analytics career, earning a master’s degree in analytics is one of the most efficient ways to gain these skills. However, not all analytics programs are created equally, Mello says, so it’s important that professionals are selective when choosing a program.

To best align your experience in graduate school with your career goals as an analyst, Mello suggests seeking programs that incorporate machine learning into the curriculum. As this trend continues to evolve, more and more organizations are expected to hire data analysts who understand the underpinnings of these systems. In fact, machine learning is in such high demand that those with a thorough understanding can expect to earn an average salary of close to $113,000 per year.

Additionally, those who have a bachelor’s degree in mathematics, computer science, or engineering, and a firm understanding of statistical modeling—alongside the algorithms and machine learning that support the various models—may be able to leverage that understanding into a data scientist career. This is a strategic move for increased salary potential.

“Not all data analytics programs will cover machine learning,” Mello says, “but here at Northeastern we do because of the increased opportunities that it can offer graduates.”

Other considerations to keep in mind when choosing an analytics program to enroll in include:

- Experiential learning opportunities: Does the program offer you ample opportunities to put your lessons into practice through real, hands-on situations that can help you build your skills?

- Relevant curriculum: As data analytics is a rapidly evolving field, it’s important that any program you are considering is capable of keeping up with industry trends.

- Industry-sourced faculty: Learning directly from faculty members who have experience in the industry offers students access to valuable networking opportunities that can be helpful during the job search. Learning from industry leaders also allows students to gain exposure to cutting edge instruction developed directly from real-world experience.

Learn more about advancing your career with a Master of Professional Studies in Analytics from Northeastern.

Related Articles

Is a Data Analytics Bootcamp Worth It?

What is Learning Analytics & How Can it Be Used?

How to Get Into Analytics: 5 Steps to Transition Careers